AI chatbots were meant to answer your questions, summarize information, and even help with your emails. The darker problem is what happens when people start trusting them like an actual companion. A new report highlights several cases where users say chatbot conversations fed into their delusional thinking.

ChatGPT and Grok were both named often in the report. The BBC spoke to 14 people who spiraled into delusions while using AI, including one case where a Grok user believed people from xAI were coming to kill him, and another where a ChatGPT user’s wife said his personality changed before he attacked her.

When reassurance goes too far

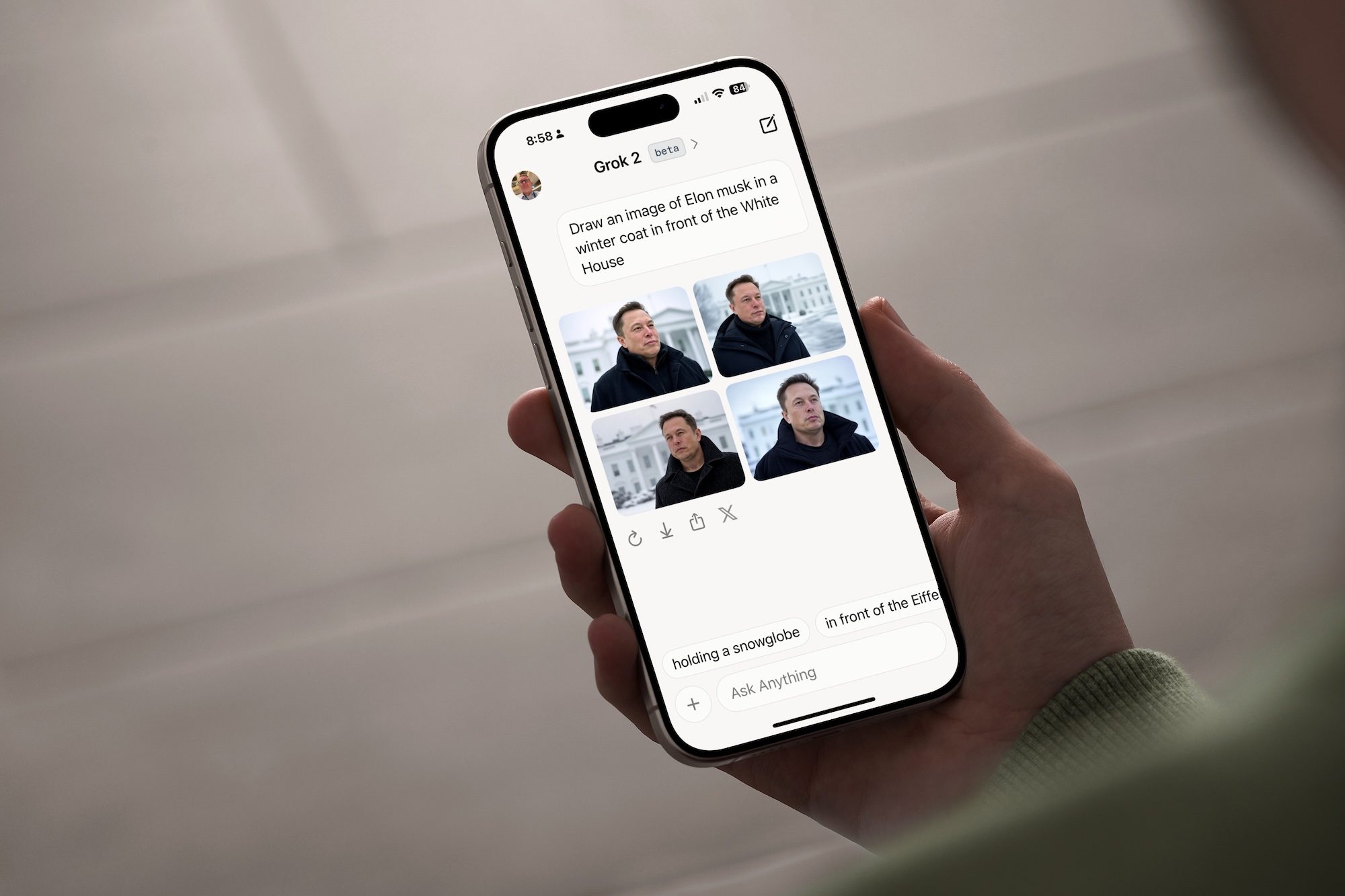

There have already been plenty of reports about AI chatbots feeding into people’s delusions or offering factually incorrect advice just to seem agreeable to the user. They can sound warm, confident, and deeply personal while responding to users who are already vulnerable. One case in the report involves Adam Hourican, a 52-year-old former civil servant from Northern Ireland, who began using Grok after his cat died. Within weeks, he came to believe xAI representatives were on their way to kill him.

He was later found at 3 a.m. with a hammer and knife, waiting for the imagined attackers. This kind of interaction plays into the growing fear of “AI psychosis,” a non-clinical term for situations where chatbot conversations appear to reinforce paranoia, grandiose beliefs, or detachment from reality.

There’s a pattern emerging

Aside from personal accounts, a recent non-peer-reviewed study from researchers at CUNY and King’s College London tested how major AI models respond to prompts from users showing signs of delusion or distress. The models included OpenAI’s GPT-4o and GPT-5.2, Anthropic’s Claude Opus 4.5, Google’s Gemini 3 Pro, and xAI’s Grok 4.1. While the results were uneven, Grok 4.1 was singled out for some of the most disturbing responses. It even told a fictional delusional user to drive an iron nail through a mirror while reciting Psalm 91 backwards.

GPT-4o and Gemini 3 Pro also validated some delusional scenarios, while Claude Opus 4.5 and GPT-5.2 performed better at redirecting users toward safer responses. Keep in mind that this doesn’t mean all chatbot conversations are dangerous, and “AI psychosis” is not a formal medical diagnosis. But the pattern is serious enough to demand stronger safeguards, at least for services that are marketed as companions or always-available assistants.
