AI chatbot platform Character.AI has introduced a new “Books” feature that allows users to step inside classic literature and interact with characters through roleplay. While the move expands the platform’s creative ambitions, it also arrives against a backdrop of mounting scrutiny over the real-world risks associated with AI chatbots.

From Reading To Roleplay

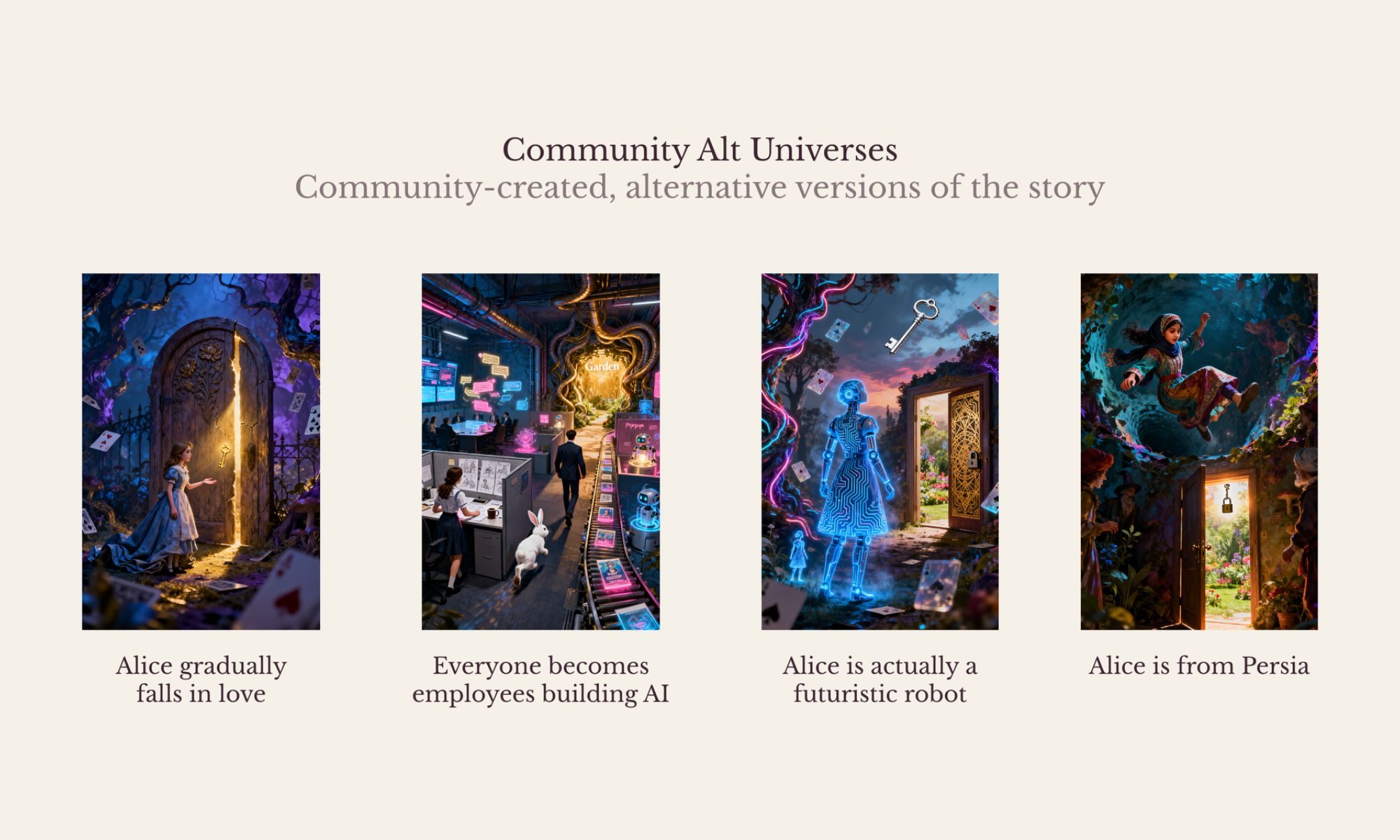

The new feature transforms public domain books into interactive experiences, letting users engage with stories like Alice in Wonderland or Pride and Prejudice as active participants rather than passive readers. Users can either follow the original narrative or deviate into alternate storylines, effectively turning literature into a dynamic, AI-driven roleplaying environment.

This builds on Character.AI’s core model, where users create and interact with bots based on fictional or real personalities, blurring the line between storytelling and simulated relationships. Researchers have noted that such interactions can feel similar to engaging with fictional characters in books or games – but with far deeper emotional immersion due to real-time conversation.

A Platform Under Pressure

The launch comes at a sensitive time for the company. Character.AI has faced lawsuits and criticism over alleged links between its chatbots and mental health crises among young users. In some cases, families have claimed that prolonged interactions with AI characters contributed to emotional dependency, isolation, and even suicide.

One widely reported case involved a teenager who developed an intense emotional bond with a chatbot; legal claims allege that the AI failed to respond appropriately to expressions of self-harm.

More broadly, experts warn that chatbots can sometimes reinforce harmful thoughts or fail to intervene effectively during mental health crises, particularly when users treat them as substitutes for real human support.

Why This Matters Now

Character.AI’s Books feature highlights a larger shift in how people consume media. Instead of simply reading stories, users are now stepping into them, forming interactive and potentially emotional relationships with AI-driven characters.

While this opens new creative possibilities, it also raises concerns about how deeply users – especially younger audiences – may immerse themselves in AI-generated worlds. The combination of narrative engagement and conversational AI can intensify emotional attachment, making it harder to distinguish fiction from reality.

What Comes Next

In response to growing criticism, Character.AI has begun implementing safety measures, including restricting certain features for minors and experimenting with more structured experiences such as the Books feature.

Going forward, the challenge will be balancing innovation with responsibility. Regulators, researchers, and tech companies are increasingly focused on defining safety standards for AI interactions, particularly in emotionally sensitive contexts.

As AI continues to evolve from a tool into a companion-like presence, features like Books may represent the future of entertainment – but also a test case for how safely that future can be built.
