If you have ever played Roblox, you know how wild and unpredictable the platform can get. Now that same unpredictability is pushing Roblox to overhaul how it moderates content.

The platform has just rolled out a real-time multimodal AI moderation system. Rather than examining individual items in isolation, it scans entire in-game scenes to catch content that slipped past previous filters.

How does Roblox’s new AI moderation work?

Unlike older moderation tools that evaluate one object at a time, the new multimodal system assesses an entire scene, including avatars, text, and 3D objects, to determine whether the full combination breaks Roblox’s Community Standards.

For example, someone might use a free-form drawing tool to sketch an offensive symbol. Each individual element could pass a single-item check, but the finished drawing is obviously a problem in context.

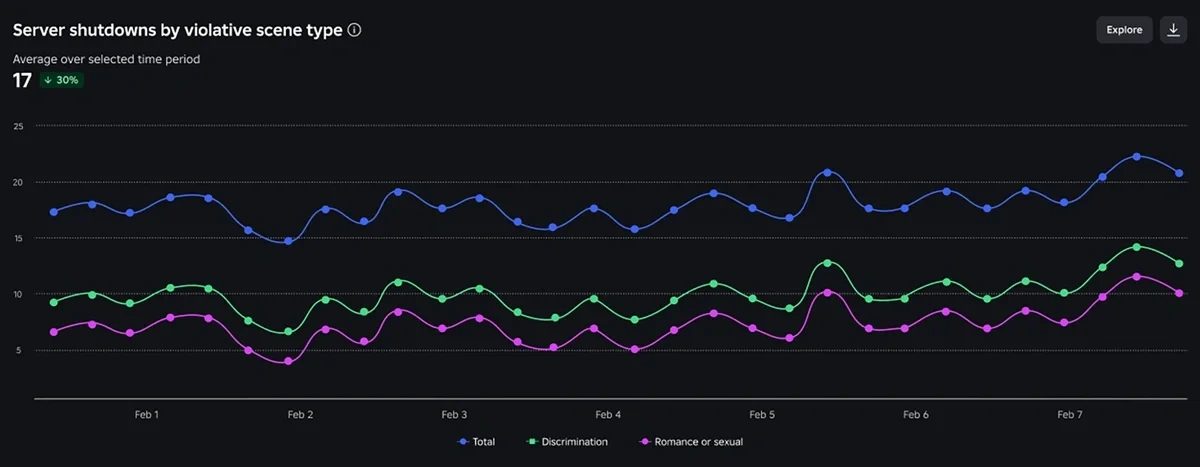

When repeated violations are detected in a single game instance, the system shuts down only that server rather than the entire game. Since launch, it has been shutting down roughly 5,000 servers per day.

Roblox is working toward monitoring 100% of playtime with this system. Beyond server shutdowns, it is also developing tools to identify and remove individual bad actors without ruining the experience for everyone else.

What does this mean for Roblox creators?

Creators are not left in the dark either. A new chart in the existing Creator Dashboard shows how many of your game's servers were shut down on any given day because of bad user behavior.

Spikes in that number can signal a problem worth investigating, giving creators a chance to review and adjust things like custom emotes or in-game building tools before the situation worsens.

A new certification program to clean up online gaming

Roblox is also tackling a larger industry issue. Alongside Keyword Studios and Riot Games, it is co-developing the DLC Leadership Program for online community managers and moderators.

Research psychologist Rachel Kowert, who serves as Research Director at Games for Change, is leading the academic side. The goal is to create standardized, evidence-based training for people managing gaming communities, something the industry has never had before.

Roblox has also been tightening its safety measures for some time, from parental controls to age-based chat filters, and this update is its most ambitious move yet.
