The battle to keep online spaces safe and inclusive continues to evolve.

As digital platforms multiply and user-generated content grows rapidly, effective harmful content detection becomes paramount. What once relied solely on the diligence of human moderators has given way to agile, AI-powered tools that are reshaping how communities and organisations manage toxic behaviour in words and visuals.

From moderators to machines: A brief history

In the early days of content moderation, human teams were tasked with combing through vast amounts of user-submitted material – flagging hate speech, misinformation, explicit content, and manipulated images.

While human insight brought valuable context and empathy, the sheer volume of submissions naturally outstripped what manual oversight could manage. Burnout among moderators also raised serious concerns. The result was delayed interventions, inconsistent judgment, and myriad harmful messages left unchecked.

The rise of automated detection

To address scale and consistency, the first automated detection software emerged – chiefly keyword filters and naïve pattern-matching algorithms. These could quickly scan for banned terms or suspicious phrases, offering some respite for moderation teams.

However, contextless automation brought new challenges: benign messages were sometimes mistaken for malicious ones due to crude word-matching, and evolving slang frequently bypassed protection.
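Both failure modes are easy to reproduce. The following is a minimal sketch, not the implementation of any particular product: the blocklist, the function names, and the sample messages are all hypothetical and chosen only for illustration. It compares raw substring matching with a slightly stricter whole-word check.

```python
import re

# Hypothetical blocklist, for illustration only.
BANNED_TERMS = ("kill", "hate")


def naive_filter(message: str) -> bool:
    """Flag a message if any banned term appears anywhere as a substring."""
    lowered = message.lower()
    return any(term in lowered for term in BANNED_TERMS)


def whole_word_filter(message: str) -> bool:
    """Flag a message only if a banned term appears as a whole word."""
    words = re.findall(r"[a-z']+", message.lower())
    return any(word in BANNED_TERMS for word in words)


if __name__ == "__main__":
    samples = [
        "Great skills on display",  # benign, but contains "kill"
        "I will kill you",          # abusive
        "I will k1ll you",          # abusive, lightly obfuscated
    ]
    for text in samples:
        print(text, naive_filter(text), whole_word_filter(text))
```

The substring filter flags the benign "skills" message, while both filters miss the obfuscated "k1ll" variant. Whole-word matching removes some false positives, but neither approach has any notion of context or intent.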

AI and the next frontier in harmful content detection

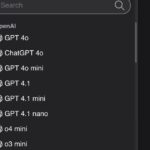

Artificial intelligence changed this field. Using machine learning, and deep neural networks in particular, AI-powered systems now process vast and diverse streams of data with a nuance that was previously impossible.

Rather than just flagging keywords, algorithms can detect intent, tone, and emergent abuse patterns.

Textual harmful content detection

Among the most pressing concerns are harmful or abusive messages on social networks, forums, and chats.

Modern solutions, like the AI-powered hate speech detector developed by Vinish Kapoor, demonstrate how free, online tools have democratised access to reliable content moderation.

The platform allows anyone to analyse a string of text for hate speech, harassment, violence, and other manifestations of online toxicity instantly – without technical know-how, subscriptions, or concern for privacy breaches. Such a detector moves beyond outdated keyword alarms by evaluating semantic meaning and context, which markedly reduces false positives and surfaces sophisticated or coded abusive language. The detection process adapts as internet language evolves.

Ensuring visual authenticity: AI in image review

It’s not just text that requires vigilance. Images, widely shared on news feeds and messaging apps, pose unique risks: manipulated visuals often aim to misguide audiences or propagate conflict.

AI developers now offer robust tools for image anomaly detection. Here, algorithms scan for inconsistencies such as irregular noise patterns, flawed shadows, distorted perspective, or mismatches between content layers – common signs of editing or fabrication.
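To make the idea of a noise-pattern check concrete, here is a deliberately simplified sketch. It is an assumption-laden stand-in, not the method used by any specific tool: real detectors typically rely on trained models, whereas this example only compares a crude per-block noise estimate against the image's typical level. The function names, block size, and threshold ratio are all hypothetical.

```python
from statistics import median, pvariance


def block_noise_levels(pixels, block=4):
    """Map each (block_row, block_col) to the variance of horizontal
    pixel differences inside that block, a crude proxy for local noise."""
    height, width = len(pixels), len(pixels[0])
    levels = {}
    for top in range(0, height - block + 1, block):
        for left in range(0, width - block + 1, block):
            residuals = []
            for y in range(top, top + block):
                for x in range(left, left + block - 1):
                    residuals.append(pixels[y][x + 1] - pixels[y][x])
            levels[(top // block, left // block)] = pvariance(residuals)
    return levels


def suspicious_blocks(pixels, block=4, ratio=4.0):
    """Return blocks whose noise estimate exceeds `ratio` times the median."""
    levels = block_noise_levels(pixels, block)
    typical = max(median(levels.values()), 1e-9)  # avoid a zero threshold
    return sorted(pos for pos, level in levels.items() if level > ratio * typical)


if __name__ == "__main__":
    # Synthetic 8x8 greyscale image with faint, uniform texture...
    image = [[100 + (x % 2) for x in range(8)] for _ in range(8)]
    # ...and one pasted-in region with a much stronger texture.
    for y in range(4, 8):
        for x in range(4, 8):
            image[y][x] = 100 + 40 * (x % 2)
    print(suspicious_blocks(image))  # prints [(1, 1)]
```

On this synthetic input only the bottom-right block is flagged. A heuristic like this would produce many false alarms on real photographs, where texture legitimately varies from region to region, which is part of why learned models are preferred in practice.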

These offerings stand out not only for accuracy but for sheer accessibility. They are completely free, require no technical expertise, and take a privacy-centric approach, allowing hobbyists, journalists, educators, and analysts to safeguard image integrity with remarkable simplicity.

Benefits of contemporary AI-powered detection tools

Modern AI solutions introduce vital advantages into the field:

- Instant analysis at scale: Millions of messages and media items can be scrutinised in seconds, vastly outpacing human moderation speeds.

- Contextual accuracy: By examining intent and latent meaning, AI-based content moderation vastly reduces wrongful flagging and adapts to shifting online trends.

- Data privacy assurance: With tools promising that neither text nor images are stored, users can check sensitive materials confidently.

- User-friendliness: Many tools require nothing more than navigating to a website and pasting in text or uploading an image.

The evolution continues: What’s next for harmful content detection?

The future of digital safety likely hinges on greater collaboration between intelligent automation and skilled human input.

As AI models learn from more nuanced examples, their ability to curb emergent forms of harm will expand. Yet human oversight remains essential for sensitive cases demanding empathy, ethics, and social understanding.

With open, free solutions widely available and enhanced by privacy-first models, everyone from educators to business owners now possesses the tools to protect digital exchanges at scale – whether safeguarding group chats, user forums, comment threads, or email chains.

Conclusion

Harmful content detection has evolved dramatically – from slow, error-prone manual reviews to instantaneous, sophisticated, and privacy-conscious AI.

Today’s innovations strike a balance between broad coverage, real-time intervention, and accessibility, reinforcing the idea that safer, more positive digital environments are within everyone’s reach – no matter their technical background or budget.

(Image source: Pexels)
