At the SAP Center this week, Nvidia CEO Jensen Huang stood before a capacity crowd to signal a fundamental shift in how we live with technology. We are moving away from simple text prompts and toward a world where AI acts, moves, and even leaves the planet.

After two decades of building the brains, the company is now fully focused on giving AI a body and the hardware to run it all.

Below are our five biggest takeaways from this year’s event.

The arrival of ‘Vera Rubin’ and the space frontier

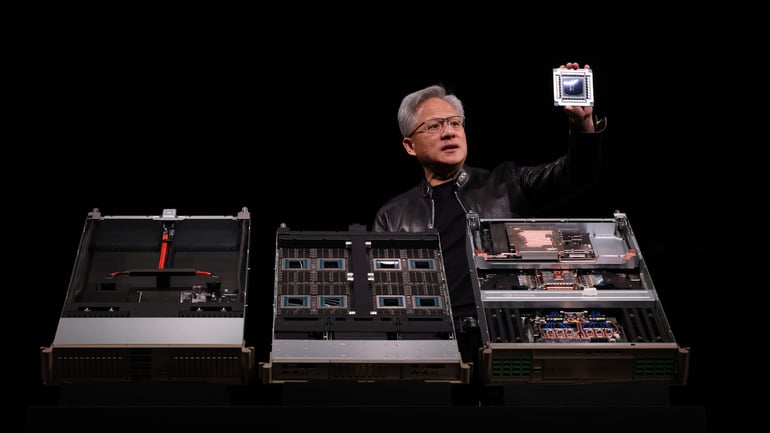

Nvidia isn’t just building chips anymore. It is building entire ecosystems. The star of the show was Vera Rubin, a new computing platform that integrates seven different chips into one giant, vertically optimized system.

Huang was clear about why this “full-stack” approach matters, stating during the keynote: “When we think Vera Rubin, we think the entire system, vertically integrated, complete with software, extended end to end, optimized as one giant system.”

But the ambition doesn’t stop on the ground. Nvidia announced Space-1 Vera Rubin, a design intended to take AI data centers into orbit. The architecture is named after Vera Rubin, the astronomer whose measurements of galaxy rotation provided key evidence for dark matter, and it is literally reaching for the stars to bring accelerated computing to space exploration.

The era of the claw (Agentic AI)

The most human shift at GTC 2026 is the transition to autonomous agents, or “claws.” Unlike the chatbots we use today that wait for a command, these are long-running systems that can reason, plan, and execute tasks over days without someone holding their hand.

A major highlight was OpenClaw, an open-source project that Huang called “the most popular open source project in the history of humanity,” and referred to as the next ChatGPT.

To help companies use this safely, Nvidia introduced NemoClaw, a stack that adds guardrails and privacy routing to these agents.

“OpenClaw has open sourced the operating system of agentic computers… Now, OpenClaw has made it possible for us to create personal agents,” Huang said.

Supercomputing for the desk

The DGX Station GB300 arrived at GTC not just as a product announcement, but as a symbol of a shift happening in how serious AI development gets done.

Powered by the GB300 Grace Blackwell Ultra Desktop Superchip, the system packs 748 gigabytes of coherent memory and up to 20 petaflops of AI compute into a deskside form factor. It can run open models of up to one trillion parameters. That is frontier AI work, from a desk.
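For a sense of what those two figures imply together, here is a rough back-of-the-envelope sketch in Python. It only checks whether the weights of a one-trillion-parameter model would fit in 748 gigabytes at a few common precisions. The precisions, the 1 GB = 10^9 bytes convention, and the decision to ignore KV cache, activations and runtime overhead are our assumptions, not details from the keynote, and the function names are purely illustrative.

```python
# Back-of-the-envelope check: do the weights of a 1-trillion-parameter
# model fit in 748 GB of memory?
# Assumptions (ours, not Nvidia's): weights only, 1 GB = 10**9 bytes,
# no allowance for KV cache, activations, or runtime overhead.

MEMORY_GB = 748              # coherent memory quoted for the DGX Station GB300
PARAMS = 1_000_000_000_000   # one trillion parameters


def weights_gb(params: int, bits_per_param: int) -> float:
    """Return the size of the model weights in gigabytes."""
    return params * bits_per_param / 8 / 1e9


def fits(params: int, bits_per_param: int, memory_gb: float = MEMORY_GB) -> bool:
    """Return True if the weights alone fit in the given memory."""
    return weights_gb(params, bits_per_param) <= memory_gb


if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weights_gb(PARAMS, bits)
        print(f"{bits:>2}-bit weights: {size:,.0f} GB, "
              f"fits in {MEMORY_GB} GB: {fits(PARAMS, bits)}")
```

Under these assumptions, only the 4-bit case (about 500 GB of weights) fits, which suggests the trillion-parameter figure presumes low-precision weights. The announcement as reported here does not say which precision Nvidia has in mind.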

The first unit was delivered on March 6 to Andrej Karpathy, founding member of OpenAI, at his home in Palo Alto. More deliveries followed. The point Nvidia is making: long-running autonomous agents need serious, always-available compute, and for some developers, that compute is now coming home.

Physical AI got a major expansion

Huang spent significant time on physical AI, the push to bring Nvidia’s models and simulation tools into machines operating in the real world.

On the automotive side, new robotaxi partners include BYD, Hyundai, Nissan and Geely, with Uber announced as a deployment partner for its ride-hailing network. In healthcare, Nvidia launched what it describes as the first domain-specific physical AI platform for surgical robotics, including:

- Open-H: The world’s largest healthcare robotics dataset, built with 35 collaborators and covering 776 hours of surgical video.

- Cosmos-H: A model family for generating synthetic surgical data and evaluating robotic policies.

- GR00T-H: A vision language action model that processes clinical task descriptions and generates motion commands for surgical robots.

Johnson & Johnson MedTech, CMR Surgical and Moon Surgical are among the first adopters. Meanwhile, NVIDIA IGX Thor is now generally available, with adoption ranging from Caterpillar’s in-cabin AI assistant to Johnson & Johnson’s surgical platform to Planet Labs processing satellite data in orbit.

The $1 trillion revenue horizon

The scale of this shift is reflected in the numbers. Huang noted that the demand for GPUs has skyrocketed, leading him to predict massive economic growth for the company over the next few years.

Highlighting the sheer volume of this boom, Huang said: “I believe computing demand has increased by 1 million times over the last few years.”

As a result, he now expects at least $1 trillion in revenue between 2025 and 2027, driven by what he calls the “five-layer cake of artificial intelligence.”

The infrastructure numbers announced at GTC were also hard to ignore.

AWS will deploy more than 1 million Nvidia GPUs, spanning Blackwell and Rubin architectures, RTX PRO Blackwell Server Edition GPUs and Groq 3 LPUs, beginning this year across its global regions.

Microsoft Azure was named the first hyperscale cloud provider to power up the new NVIDIA Vera Rubin NVL72 systems, after having deployed hundreds of thousands of liquid-cooled Grace Blackwell GPUs across its data centers in under a year.

NVIDIA Cloud Partners have now doubled their AI factory footprint year over year, collectively deploying more than one million GPUs representing over 1.7 gigawatts of AI capacity worldwide.

Nvidia is racing to build AI data centers across Asia while open-sourcing key tech, setting up a high-stakes showdown with China in the global AI infrastructure boom.
